-

Workplace

2024年六个人力资源趋势和预测

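Every new year means we are entering a new world of work, and HR teams everywhere are expected to stay ahead of it. Until AI becomes powerful enough to predict the future for us, we will use original Lattice research and conversations with industry experts from the CPO Council to round up the most important trends and predictions for HR teams in 2024.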

了解 2024 年将如何成为人力资源领域迄今为止最具影响力的一年。

1. 绩效和敬业度将是人力资源部的最高优先事项。

在提高绩效和打造良好工作场所之间犹豫不决?行业领先的人力资源专业人士比任何人都更清楚,成功的企业需要两者兼而有之。

事实上,我们的2024 年人才状况战略报告中绩效最高的人力资源团队更有可能将绩效和敬业度作为来年的首要任务。

支持这两项举措的理念是高度敬业的员工尽最大努力工作。(还记得悄悄辞职吗?那只是一年前的事了,公司仍然发现低敬业度会导致较差的业务成果。)有利于卓越绩效的环境需要明确的期望、灵活的工作政策、管理者的信任感以及定期认可——根据Lattice 和 YouGov 2023 年的一项调查,所有这些都有助于提高员工敬业度。

人力资源团队可以采取以下几项措施来帮助员工在 2024 年尽最大努力:

实施全公司范围的级联目标,帮助员工了解他们的工作如何与大局联系起来。

使用敬业度调查结果来确定公司需要人力资源部门关注和支持的领域。

让员工清楚地了解公司目标、成长机会和绩效期望。

下载我们的免费工作簿《人力资源平衡绩效与幸福感指南》。

2. DEIB 不会消失。

多样性、公平性、包容性和归属感 (DEIB) 仍将是全球人才团队关注的焦点。

尽管我们的2024 年人才状况战略报告发现 DEIB 在人力资源团队的优先事项清单中排名下降,但仍有希望:54% 的人力资源专业人士表示,他们的公司在过去两年中在 DEIB 计划和政策上投入了更多精力。

Greenhouse首席人力官Donald Knight表示:“当人力资源领导者适应人员的力量时,我们需要首先记住:趋势来来去去,但 DEIB 的核心依然稳固。” “虽然焦点可能会转移,但 DEIB 不仅仅是一个复选框,它是我们通往创造力、广阔视野和竞争优势的门户。”

不要让新的优先事项掩盖 DEIB 一直在稳步发展的努力。员工注意到并内化了不再强调 DEIB 的工作场所变化。当员工感到归属感减弱时,他们就很难做出有意义的贡献,而且他们更有可能离开,去寻找一个拥抱他们并赋予他们权力的工作场所。

"Let's stand firm in defending what we know is good for people and for business, even when it isn't what everyone is talking about," Knight added.

人员团队可以通过三种方式开始 2024 年并重新关注 DEIB:

下载我们的免费工作手册《如何在您的公司推出员工资源组》。

使用我们免费的多元化和包容性调查模板制定全年对 DEIB 员工进行调查的计划。

使用我们的分析仪表板密切关注内部流动数据。

3.人力资源正在加入人工智能运动。

人工智能席卷了 2023 年的新闻头条,这意味着最新人工智能技术的实施将在 2024 年成为人力资源领导者的考虑(并纳入他们的预算)。

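"Everywhere I look, there is talk about AI," Knight said. "But here are my two cents: AI isn't the bad guy. When bias is mitigated, it becomes a powerful ally, ready to help us focus more on what really matters: the human connection in HR."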

人力资源部门并不害怕被人工智能取代:根据我们的2024 年人才状况战略报告,72% 的人力资源专业人士认为人工智能不会影响公司员工人数,65% 的人总体上并不担心他们的工作保障。事实上,正如 Knight 所描述的那样,人工智能已经在帮助人力资源部门让工作变得更有意义——76% 的人表示他们已经开始在工作场所讨论、探索或实施人工智能解决方案。

“无论我们是否做好准备,人工智能都是我们未来的一部分,”Exabeam 首席人力资源官 Gianna Driver 说道。“在人力资源领域,我们经常被视为技术的较晚采用者。现在是我们改变这种说法的时刻,让人工智能让我们的工作更加高效、更快、更好。”

当然,人工智能也伴随着严重的风险,比如算法偏差、知识产权侵权和网络安全漏洞。但Gartner 调查的68% 的人力资源高管表示,人工智能的好处大于风险。

这些风险可以而且应该由人力资源部门管理。制定员工使用政策、培训团队管理公司知识产权以及确保人类审查和编辑人工智能工具生成的任何内容仅仅是开始。

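"AI enables us to do our jobs better," Driver said. "So let AI do what technology does well, and focus on being human, bringing empathy and other people-centered skills to HR."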

4. 赋予管理者领导能力将帮助企业走得更远。

人力资源团队严重依赖经理来掌握员工敬业度、为团队提供目标和记录进度,并充当员工对职业倦怠、薪酬等问题的眼睛和耳朵。

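That is a heavy load for managers who must also balance their own roles and expectations. In 2023, managers faced a higher risk of burnout than individual contributors, with 66% of team-level managers reporting some or a severe degree of burnout.

But in organizations where managers are supported, empowered, and given the tools to succeed, "employees are 2.8 times more likely to give a positive net promoter score, 2.5 times more likely to describe the company as highly innovative, and 1.6 times more likely to be highly engaged," according to research published in MIT Sloan Management Review.

In 2024, manager enablement will be key to rolling out HR programs that sustain performance and engagement. Here are a few things HR can do to help managers start 2024 with confidence: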

建立更好的职业道路,以便现任管理者可以更有效地指导员工发展,而有抱负的管理者可以了解该职位是否适合他们。

提供有关情商、冲突解决、薪酬透明度和其他主题的 经理发展培训。

定期与经理分享资源,例如绩效评估指南、薪资谈判脚本以及撰写有意义的反馈的技巧。

5. 变革疲劳会影响员工的绩效。

变革疲劳 是员工对公司变革的普遍冷漠或顺从感,尤其是当短期内发生太多变革时。

裁员、收购、重组和其他变化在企业中是常有的事,但在过去的一年中尤为普遍,41% 的人力资源团队在我们的 2024 年人才状况战略报告中报告了其公司的裁员情况。在大多数情况下,这些困难的变化处理得不好:

59% 的人力资源专业人士表示,他们认为最高管理层未能提供足够的支持来解决裁员后员工士气低落的问题。

另外 62% 和 63% 的受访者表示,最高管理层没有为培训经理或重新定义角色提供足够的支持。

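Layoffs are not the only source of change fatigue. Reorganizations, culture transformations, return-to-office changes, and the replacement of legacy technology systems can also greatly affect employees' daily lives and their understanding of their roles. According to Gartner, the average employee experienced 10 planned enterprise changes in 2022, up from 2 in 2016.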

Gartner 的研究表明,变革疲劳的影响不仅会损害员工士气,还会直接影响绩效、生产力和其他业务成果。当受访员工经历变革疲劳时,他们表示:

留下来的意愿降低 42%

信任度降低 30%

可持续绩效降低 27%

响应速度降低 27%

可自由支配的努力减少 22%

企业贡献减少17%

然而 Gartner 在另一项调查中还发现,只有20% 的人力资源领导者有能力发现变革疲劳,这意味着它必须成为 2024 年人员团队倾听策略和员工调查的关键部分。

HR 可以做的事情如下:

在公司实施 重大变革之前、期间和之后向高管传达变革疲劳的风险。

确保经理有能力通过提供谈话要点或安排全体会议来与团队讨论变革。

在任何重大变化之前和之后,请密切关注脉搏调查数据,以准确了解士气、参与度和绩效需求。

6. 员工期望高管有更多同理心。

内部沟通是公司文化的支柱,组织价值观通过领导层与员工的沟通方式得以具体化。

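Regular communication channels, such as all-hands meetings, town halls, and recurring time for employees to meet with executives, are essential to making employees feel supported and heard, but the subject of the communication matters just as much.

In 2024, HR teams will have to keep an eye on the news and world events and help company leaders respond to them in a way that is authentic, meaningful, and sincere.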

安永会计师事务所对商业同理心的研究发现:

More than half of employees (52%) believe their company's efforts to show empathy toward employees are dishonest.

大多数员工 (86%) 表示同理心领导有助于提高士气,而 87% 的员工认为同理心是创建包容性工作场所的关键部分。

To keep the organization cohesive, HR teams need to help repair the relationship between employees and executives, and fast. Here's how:

向高管证明士气低落和敬业度低会影响业务成果。

确定推动内部和外部沟通的组织价值观。

与最高管理层合作制定工作场所新闻和世界事件评论指南。

—

当然,2024年也将充满惊喜。与此同时,人力资源团队可以做的最好的准备就是调查员工的需求,并与公司领导层密切合作,确定未来的发展方向。哦,还有投资您的人力资源技术堆栈:这就是为什么您在 1 月 1 日之前需要更好的人力资源技术解决方案。

-

52%的一线员工因技术工具而辞职,揭开一线员工的真正期望

一线工人,如送货司机、医护人员和零售助理,在疫情期间一直是必不可少的。“疫情确实迫使人们认识到这些工人是多么重要......并真正让他们看到了曙光,”Meta公司的Workplace总监Christine Trodella说。

最终,令人鼓舞的是,在接受Meta调查的1350名最高管理层中,100%的人表示,从现在起,一线将是一个战略重点。而83%的人说,这场疫情确实让人看到了这一点。

重要的是,一线员工同意他们的形象一直在上升。29%的人现在觉得自己和办公室的同事一样重要,27%的人说他们只是因为疫情而受到重视,74%的人对自己的角色感到满意,而一年前只有47%。

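However, recognition of the frontline by the C-suite and leadership has historically been low: only 27% of C-suite respondents said the frontline had been a priority before the pandemic. As Trodella put it, frontline workers have historically been "out of sight, out of mind."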

此外,在7000名一线员工中,只有超过一半(55%)的人告诉Workplace,他们感到与总部和领导层有联系。再加上每10个一线员工中就有7个在过去12个月中遭受或面临职业倦怠的风险,领导们手上有一个迫在眉睫的危机。

"我们已经看到了一些改善和来自最高管理层的良好意图,但我们仍然有很长的路要走,”Trodella指出。

揭开 "伟大的一线辞职"

雇主面临的一线员工的挑战的一个主要部分是所谓的 "伟大的一线辞职"。

这些员工不满意,准备用脚投票。57%的一线员工告诉Workplace,他们准备转到另一个薪水更高的一线岗位,而45%的人正在考虑全部离开一线。

缺乏学习和发展已经被作为 "大辞职 "趋势的一个普遍解释。因此,更令人担忧的是,43%的一线员工表示,他们在目前的组织中能学到的东西已经达到了极限。

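Clearly, leaders are not burying their heads in the sand; they are aware of the trend. Almost seven in ten C-suite respondents (70%) expect high attrition, but they are unsure how to respond.

Only 25% said they plan to raise wages, only 26% plan to invest more in learning and development, and one in five actually plans to cut career development funding in the coming year.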

更好的沟通技术是阻止辞职的解决方案吗?

虽然公司重新思考他们对工资、福利和学习的态度是至关重要的,但Trodella指出,成功的吸引和保留需要公司关注工人的 "围绕透明度和信息流的真正期望"。一线工人希望能够获得与公司其他任何人一样的信息。

技术作为一种解决方案是很有帮助的,一线工人表示他们期望能像办公室的同事一样,获得高质量的技术。

94%的最高管理层承认他们传统上优先考虑办公室和基于桌面的技术。

这是一个问题,因为52%的一线工人告诉Workplace,获得工具是他们会转到新工作的原因,44%的一线工人认为基于办公桌的同事有更好的技术。

因此,很明显,投资于正确的工具,特别是良好的沟通技术,将有助于公司在持续的一线人员流失危机中取得成功。

适合一线的技术

下一个挑战是弄清楚一线工人想要和需要什么类型的技术。

虽然他们希望拥有与办公桌上的工作人员相同质量的技术--仅仅因为他们没有办公桌,并不意味着他们应该没有发言权--但他们所依赖的工具必须考虑到一线的情况,尤其要适合他们非朝九晚五的时间表。

"他们需要易于使用的技术,可以在移动设备上使用,更重要的是不需要培训,"Trodella解释说。

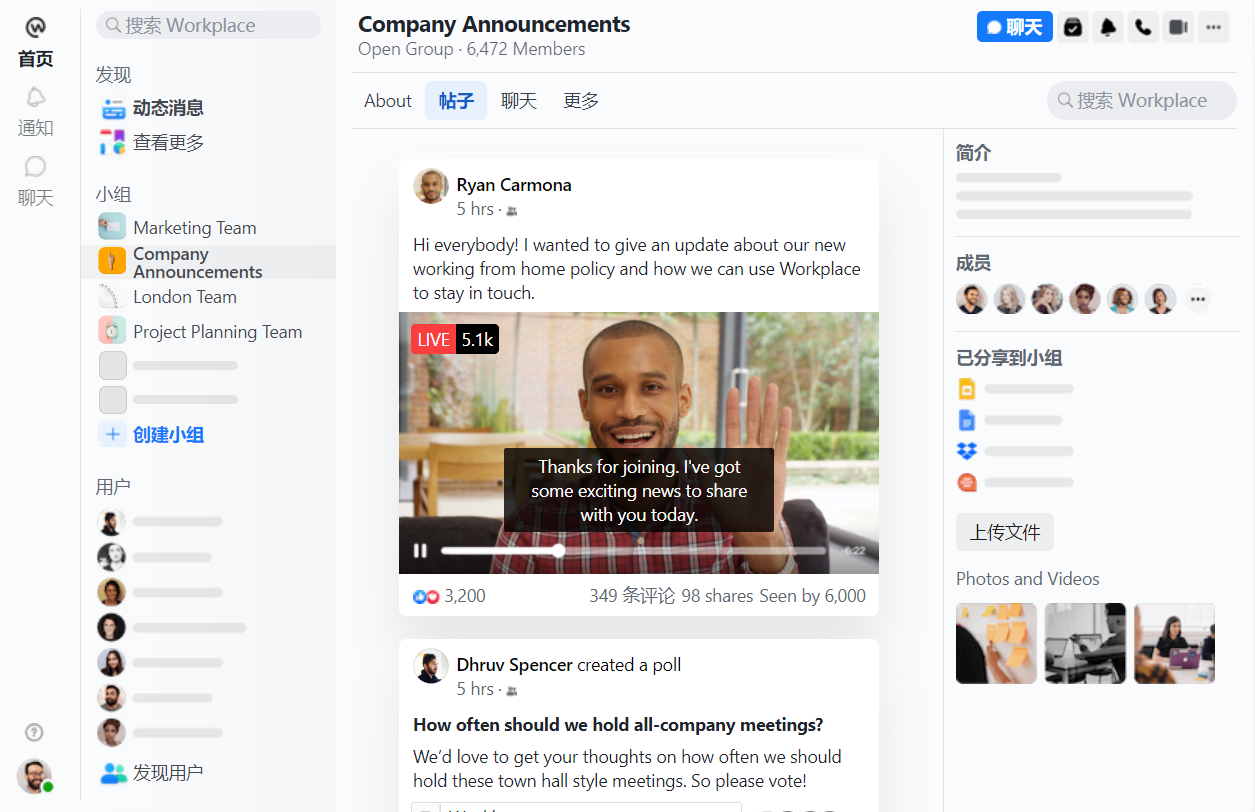

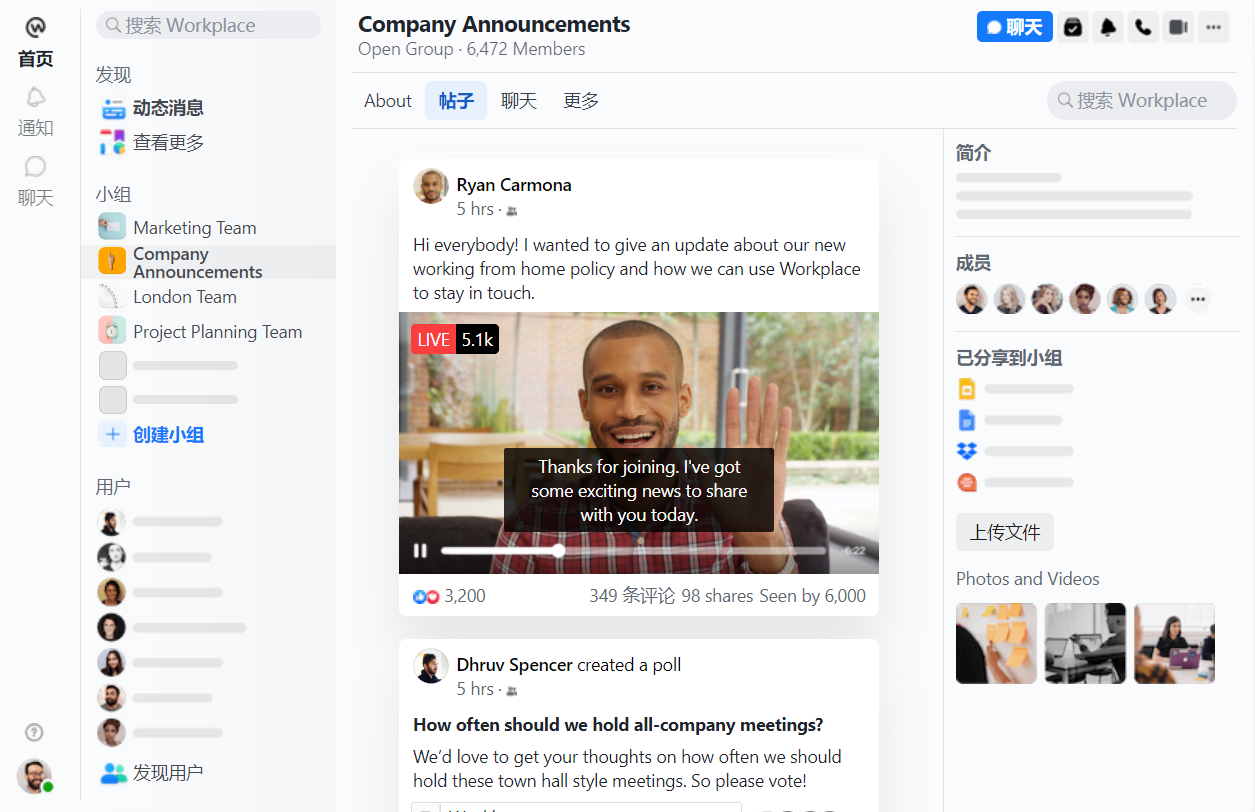

这正是Workplace试图提供的,它的平台以Facebook的消费者产品为蓝本,该平台现在还与另一款Facebook产品Whatsapp集成。

这有助于 "消除 "将技术送到一线工人手中的摩擦,Trodella补充道。

他们还希望技术能够实现双向对话,这将有助于推动工作中的信任和透明度。目前,四分之一的一线工人不相信他们的高级领导团队或他们的部门经理能保护他们的福利和幸福。

Trodella说,拥有技术只是其中的一部分,下一部分是 "使用技术的方式"。

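Workplace's report notes that leaders should talk with workers rather than at them. Trodella explained: "Some of the best stories from our customers during the pandemic were about how many of their CEOs would go live on video using Workplace."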

阿斯利康和沃达丰都向UNLEASH讲述了他们在疫情期间如何利用这些功能,使员工在不在同一地点工作的情况下保持敬业度。

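CEO video sessions let employees ask questions and give feedback to the C-suite. This made people feel more connected to leadership and created "a more approachable C-suite" for employees.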

"我们确实看到,当公司采用这种技术,并采用连接人们的行为时,他们会得到非常好的结果,”Trodella总结道。

现在是雇主为一线投资新技术标准的时候了,否则就有可能被那些把一线需求放在首位的竞争对手夺走人才。

作者:Allie Nawrat, UNLEASH

-

【德国】面向一线工人的协同沟通应用Flip完成了3000万美元A轮融资

世界上80%以上的劳动力,约27亿人,并不是整天呆在电脑前,这导致了在技术方面的强烈差异,大约1%的IT投资是针对他们的用户。随着智能手机和应用程序的兴起,这种情况正在迅速改变,今天,一个因这一趋势而业务蓬勃发展的初创公司宣布获得一些资金,以抓住这一机会。

Flip (https://www.flipapp.de/)公司为一线工人制作了一个通信应用程序,供他们相互聊天,从管理层获得通信,并进行换班等人力资源活动,该公司已经融资了3000万美元。这家初创公司--总部设在德国斯图加特,主要用于德语区DACH地区--将利用这笔资金打入新市场,首先是英国,首席执行官兼创始人Benedikt Ilg说。

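Notion Capital and Berlin-based HV Capital co-led the round, with participation from previous backers Cavalry Ventures and LEA Partners, as well as individual backers including Volkswagen chairman Matthias Müller, among many others.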

市场上有许多其他的应用程序,针对世界劳动力的同一领域,以及围绕通信的相同使用案例:

它们包括Meta的Workplace、微软的Teams、Crew(现在由Square拥有)、Blink、Yoobic、When I Work、Workstream以及更多。事实上,就在今天,另一个主要关注人力资源方面机会的一线工作应用Snapshift也宣布获得4500万美元融资。

但是,对于这个看起来很拥挤的领域,有三件事是值得一提的。

首先,这是一个足够大和分散的市场,可能会有几个强大的玩家长期参与其中。

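Second, because the market is still fairly new, each player still has plenty of room to grow and innovate. (Ilg said one notable area for Flip is that its app strictly complies with GDPR rules, which helps it win business, whereas other companies may claim the same but in practice do not.) Another area is more basic: making the app easy to use, not only in terms of user interface and experience, but also in terms of the actual app size, the space it takes up on a person's phone, and the bandwidth it needs.

"Before founding Flip, I worked in production at Porsche, and I know what it feels like to lack communication with management," Ilg said. "When it comes to downloading and using it, we are the simplest app in the space, with the fewest screens. That is the ethos we wanted to build: doing things for the end user."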

第三,如果临界质量对成功很重要的话,Flip实际上正在跃居前列。

迄今为止,Flip已经积累了200个客户,横跨约100万用户,名单中包括麦当劳、Rossmann、Edeka、Magna和Mahle。伊尔格告诉我,在这场大流行中--一线工人突然出现在人们意识的前沿和中心--其收入蓬勃发展,在去年猛增六倍。(这家初创公司没有披露实际收入和估值)。

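For those following this space, you may have noticed a story we wrote a few weeks ago about how Workplace had been hoping to announce McDonald's as a customer, but the announcement kept being delayed. Flip's work with the company currently covers about 60,000 people. Given that the fast-food giant is already publicly working with Flip, it will be interesting to see how the Workplace deal plays out. In any case, it underscores how much is still up for grabs here.

Today the app is mainly used by employers to communicate with their wider workforce, people who move around during the day and typically do not work in one place. Those workers also use the app to communicate with one another, mainly for productivity purposes such as swapping shifts or checking pay slips, but not to carry out functions directly tied to their jobs. That is an area of functionality that competitors such as Yoobic have been building out, and Ilg said Flip is starting to look at how it might do the same.

"Flip gives every deskless employee the opportunity to truly take part in their company's communication. There is huge potential in actively integrating these employees," Jos White of Notion Capital said in a statement. "As Flip expands into English-speaking markets, we look forward to supporting it with our expertise and experience."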

创始人表示:在过去的一年里,我们已经发展成为欧洲领先的员工应用程序提供商之一。我们的营业额增加了六倍,员工人数增加了四倍。我们之所以能够做到这一点,主要是因为我们强大的团队、优秀的合作伙伴以及对运营员工需求的关注。

现在是下一步和新挑战的时候了。不仅我们对“赋能每一位员工”的愿景深信不疑,还有来自 HV Capital 和 Notion Capital 的新投资者积极支持我们。

来自TC 作者Ingrid Lunden

-

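How to Use People Analytics to Build an Equitable Workplace

Summary: Automation is coming to HR. By automatically collecting and analyzing large datasets, AI and other analytics tools promise to improve every stage of the HR pipeline, from hiring and compensation to promotion, training, and evaluation. However, these systems can reflect historical biases and discriminate on the basis of race, gender, and class.

Managers should consider that:

1) models are likely to perform best for individuals in majority demographic groups but worse for less well-represented groups;

2) there is no such thing as a truly "race-blind" or "gender-blind" model, and explicitly omitting race or gender from a model can even make things worse;

3) if demographic categories are unevenly distributed in your organization (as they are in most), even carefully built models will not lead to equal outcomes across groups.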

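People analytics, the application of scientific and statistical methods to behavioral data, traces back to Frederick Winslow Taylor's 1911 classic The Principles of Scientific Management, which sought to apply engineering methods to the management of people. But it was not until a century later, after advances in computing power, statistical methods, and especially artificial intelligence (AI), that the field's power, depth, and breadth of application truly exploded, particularly, but not only, in human resources (HR) management. By automatically collecting and analyzing large datasets, AI and other analytics tools promise to improve every stage of the HR pipeline, from hiring and compensation to promotion, training, and evaluation.

Algorithms are now being used to help managers measure productivity and make important decisions about hiring, compensation, promotion, and training opportunities, all of which can be life-changing for employees. Companies are using this technology to identify and close pay gaps across gender, race, or other important demographic categories. HR professionals routinely use AI-based tools to screen résumés to save time, improve accuracy, and uncover hidden patterns in qualifications that are associated with better (or worse) future performance. AI-based models can even be used to suggest which employees might quit in the near future.

Yet for all the promise of people analytics tools, they can also lead managers seriously astray.

Amazon had to throw out a résumé-screening tool built by its engineers because it was biased against women. Or consider LinkedIn, which is used by professionals around the world to network and search for jobs and by HR professionals to recruit. The autocomplete feature of the platform's search bar was found to suggest replacing female names such as "Stephanie" with male names such as "Stephen."

Finally, on the recruiting side, a social media ad for opportunities in science, technology, engineering, and math (STEM) fields that had been carefully designed to be gender neutral was shown disproportionately to men by an algorithm designed to maximize the value of recruiters' ad budgets, because women are generally more responsive to advertisements, and ads shown to them are therefore more expensive.

In each of these examples, a breakdown occurred in the analytical process and produced an unintended, and sometimes severe, bias against a particular group. Yet these breakdowns can and must be prevented. To realize the potential of AI-based people analytics, companies must understand the root causes of algorithmic bias and how they play out in common people analytics tools.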

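The Analytical Process

Data is not neutral. People analytics tools are usually built on an employer's historical data on the hiring, retention, promotion, and compensation of its employees. Such data always reflects the decisions and attitudes of the past. So as we try to build the workplace of the future, we need to be mindful of how our retrospective data may reflect both old and existing biases and may fail to fully capture the complexity of people management in an increasingly diverse workforce.

Data can carry explicit bias directly. For example, performance evaluations at your company may have been historically biased against a particular group. Over the years you have corrected that problem, but if the biased evaluations are used to train an AI tool, the algorithm will inherit and propagate the bias.

There are also subtler sources of bias. For example, undergraduate GPA might be used as a proxy for intelligence, or an occupational license or certificate might serve as a measure of skill. These measures are incomplete, however, and often contain biases and distortions. For instance, job applicants who had to work during college, who are more likely to come from lower-income backgrounds, may have earned lower grades, yet they may in fact be the best candidates because they have demonstrated the drive to overcome obstacles. Understanding the potential mismatch between what you want to measure (e.g., intelligence or ability to learn) and what you actually measure (e.g., scores on scholastic tests) is important in building any people analytics tool, especially when the goal is a more diverse workplace.

The performance of a people analytics tool is a product of both the data it is fed and the algorithm it uses.

Here, we offer three lessons that you should keep in mind when managing your people.

First, models that maximize the overall quality of prediction, the most common approach, are likely to perform best for individuals in majority demographic groups but worse for less well-represented groups. This is because algorithms typically maximize overall accuracy, so performance for the majority population carries more weight than performance for the minority population when the algorithm's parameters are determined. An example might be an algorithm used on a workforce made up mostly of people who are either married or single and childless; the algorithm may determine that a sudden increase in the use of personal days indicates a high likelihood of quitting, but that conclusion may not apply to single parents who need to take time off now and then because a child is ill.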
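To make the first lesson concrete, here is a minimal sketch of how a single overall accuracy figure can hide weak performance for a small group. The group labels, the counts, and the `accuracy_by_group` helper are all hypothetical and chosen only for illustration; they do not come from any real HR dataset or tool.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return (overall_accuracy, {group: accuracy}).

    `records` is an iterable of (group, predicted, actual) tuples.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        hits[group] += int(predicted == actual)
    overall = sum(hits.values()) / sum(totals.values())
    per_group = {group: hits[group] / totals[group] for group in totals}
    return overall, per_group


# Hypothetical toy data: 90 majority-group employees and 10 minority-group
# employees. The model predicts "will quit" (1) for everyone shown here.
records = (
    [("majority", 1, 1)] * 85 + [("majority", 1, 0)] * 5
    + [("minority", 1, 1)] * 4 + [("minority", 1, 0)] * 6
)

overall, per_group = accuracy_by_group(records)
print(f"overall: {overall:.2f}")
for group, acc in per_group.items():
    print(f"{group}: {acc:.2f}")
```

In this toy data the overall accuracy looks healthy because the majority group dominates the total, while the minority group is classified correctly less than half the time. Reporting the per-group numbers side by side is what exposes the gap.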

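Second, there is no such thing as a truly "race-blind" or "gender-blind" model. Indeed, explicitly omitting race or gender from a model can even make things worse.

Consider this example. Imagine that your AI-based people analytics tool, to which you have carefully avoided giving information on gender, has built a strong track record of predicting which employees are likely to quit shortly after being hired. You are not sure exactly what the algorithm has latched onto (to users, AI often functions like a black box), but you avoid hiring people the algorithm flags as high risk and see a clear drop in the number of new hires who quit soon after joining. Some years later, however, you are hit with a lawsuit for discriminating against women in your hiring process. It turns out that the algorithm was disproportionately screening out women from a particular zip code that lacks a daycare facility, creating a burden for single mothers. Had you known, you might have solved the problem by offering daycare near work, not only avoiding the lawsuit but even gaining a competitive advantage in recruiting women from that area.

Third, if demographic categories such as gender and race are disproportionately distributed in your organization, as is typical (for example, if most managers in the past have been male while most workers have been female), even carefully built models will not lead to equal outcomes across groups. That is because, in this example, a model that identifies future managers is more likely to misclassify women as unsuitable for management and to misclassify men as suitable for management, even if gender is not one of the model's criteria. In short, the reason is that the model's selection criteria are likely to be correlated with both gender and managerial aptitude, so the model will tend to be "wrong" in different ways for women and men.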

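How to Get It Right

For the above reasons (and others), we need to be especially aware of the limitations of AI-based models and monitor their application across demographic groups. This is especially important for HR because, in stark contrast to general AI applications, the data that organizations use to train AI tools will very likely reflect the very imbalances that HR is currently working to correct. As such, firms should pay close attention to who is represented in the data when creating and monitoring AI applications. More pointedly, they should look at how the makeup of the training data may be skewing the AI's recommendations in one direction or another.

One tool that can help in this respect is a bias dashboard, which separately analyzes how a people analytics tool performs across different groups (e.g., by race), allowing early detection of possible bias. The dashboard highlights, across groups, both statistical performance and impact. For an application supporting hiring, for example, the dashboard might summarize the model's accuracy and the types of mistakes it makes, as well as the share of each group that received an interview and was eventually hired.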
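The numbers behind such a dashboard can be sketched in a few lines. The example below is only an illustration under stated assumptions: the `Candidate` record, its field names, the `bias_dashboard` function, and the sample data are all hypothetical, and a real dashboard would read from an applicant tracking system and add confidence intervals for small groups.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Candidate:
    group: str            # demographic group used only for monitoring
    predicted_fit: bool   # what the model recommended
    actual_fit: bool      # hypothetical ground-truth label observed later
    interviewed: bool
    hired: bool


def rate(numerator: int, denominator: int) -> Optional[float]:
    """Return numerator / denominator, or None when the group is empty."""
    return numerator / denominator if denominator else None


def bias_dashboard(candidates):
    """Summarize accuracy, error types, and outcomes separately per group."""
    report = {}
    for group in sorted({c.group for c in candidates}):
        members = [c for c in candidates if c.group == group]
        fits = [c for c in members if c.actual_fit]
        non_fits = [c for c in members if not c.actual_fit]
        report[group] = {
            "n": len(members),
            "accuracy": rate(
                sum(c.predicted_fit == c.actual_fit for c in members),
                len(members),
            ),
            # Good candidates the model wrongly screened out.
            "false_negative_rate": rate(
                sum(not c.predicted_fit for c in fits), len(fits)
            ),
            # Poor fits the model wrongly recommended.
            "false_positive_rate": rate(
                sum(c.predicted_fit for c in non_fits), len(non_fits)
            ),
            "interview_rate": rate(sum(c.interviewed for c in members), len(members)),
            "hire_rate": rate(sum(c.hired for c in members), len(members)),
        }
    return report


# Hypothetical sample: two groups of four candidates each.
SAMPLE = [
    Candidate("A", True, True, True, True),
    Candidate("A", True, True, True, False),
    Candidate("A", True, False, True, False),
    Candidate("A", False, False, False, False),
    Candidate("B", False, True, False, False),
    Candidate("B", False, True, False, False),
    Candidate("B", True, True, True, True),
    Candidate("B", False, False, False, False),
]

report = bias_dashboard(SAMPLE)
for group, metrics in report.items():
    print(group, metrics)
```

Splitting errors into false negatives and false positives matters here because the two groups can have similar hire rates while the model makes very different kinds of mistakes for each of them.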

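In addition to monitoring performance metrics, managers can test explicitly for bias. One way to do so is to exclude a particular demographic variable (e.g., gender) when training the AI-based tool but then explicitly include that variable in a subsequent analysis of outcomes. If gender is highly correlated with outcomes (for example, if one gender is disproportionately likely to be recommended for a raise), that is a sign that the AI tool might be implicitly incorporating gender in an undesirable way. It may be that the tool disproportionately identified women as candidates for raises because women tend to be underpaid in your organization. If so, the AI tool is helping you solve an important problem. But it could also be that the AI tool is reinforcing an existing bias. Further investigation is needed to determine the underlying cause.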
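The after-the-fact check described above can be sketched as follows: the model never sees gender, but its recommendations are joined back to gender afterward and compared. Everything here is an assumption made for illustration, including the data, the function names, and the `REVIEW_THRESHOLD` value, which is an arbitrary trigger for a closer look rather than a legal or statistical standard.

```python
# Illustrative cutoff only: flag for human review when the lowest group rate
# falls below this fraction of the highest group rate.
REVIEW_THRESHOLD = 0.8


def recommendation_rates(rows):
    """Return {gender: share recommended} from (gender, recommended) pairs."""
    counts = {}
    for gender, recommended in rows:
        total, yes = counts.get(gender, (0, 0))
        counts[gender] = (total + 1, yes + int(recommended))
    return {gender: yes / total for gender, (total, yes) in counts.items()}


def rate_ratio(rates):
    """Ratio of the lowest to the highest recommendation rate across groups."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Hypothetical output of a raise-recommendation model trained without gender.
rows = (
    [("F", True)] * 30 + [("F", False)] * 70
    + [("M", True)] * 15 + [("M", False)] * 85
)

rates = recommendation_rates(rows)
ratio = rate_ratio(rates)
needs_review = ratio < REVIEW_THRESHOLD
print(rates, round(ratio, 2), needs_review)
```

A flag from this check says nothing about the cause. As the text notes, the gap may reflect the tool correcting historical underpayment or reinforcing a bias, so the output is a prompt for investigation, not a verdict.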

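It is important to remember that no model is complete. For instance, an employee's personality likely affects their success at your firm without necessarily showing up in your HR data on that employee. HR professionals need to be alert to these possibilities and document them to the extent possible. While algorithms can help interpret past data and identify patterns, people analytics is still a human-centered field, and in many cases, especially the difficult ones, the final decisions are still going to be made by humans, as reflected in the currently popular phrase "human-in-the-loop analytics."

To be effective, these humans need to be aware of machine learning bias and the limitations of the model, monitor the models' deployment in real time, and be prepared to take necessary corrective action. A bias-aware process incorporates human judgment into each analytical step, including awareness of how AI tools can amplify biases through feedback loops. A concrete example is when hiring decisions are based on "cultural fit": each hiring cycle brings more similar employees into the organization, which in turn makes the cultural fit even narrower, potentially working against diversity goals. In this case, broadening the hiring criteria may be called for, in addition to refining the AI tool.

People analytics, especially when based on AI, is an incredibly powerful tool that has become indispensable in modern HR. But quantitative models are intended to assist, not replace, human judgment. To get the most out of AI and other people analytics tools, you will need to consistently monitor how the application is working in real time, what explicit and implicit criteria are being used to make decisions and train the tool, and whether outcomes are affecting different groups differently in unintended ways. By asking the right questions of the data, the model, the decisions, and the software vendors, managers can successfully harness the power of people analytics to build the high-achieving, equitable workplaces of tomorrow.

From HBR, by David Gaddis Ross, David Anderson, and Margrét V. Bjarnadóttir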

-

VC与 Facebook 接洽,想分拆办公协作平台Workplace ,但 Facebook视其为战略资产, 拒绝了提议

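Workplace, originally built as a version of Facebook for employees to communicate with one another, now has more than 7 million users. It has carved out a place for itself as an app that helps companies communicate internally using essentially the same tools that have proven sticky in people's lives with friends and family. As it turns out, that traction has been bringing Workplace another kind of attention.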

我们了解到,企业投资者与 Facebook(在更名为 Meta 之前)接洽,为社交网络提供了一个提议:剥离该组织,他们说,让我们作为一家初创公司支持它。据消息人士称,一笔交易会将一个新独立的 Workplace 视为“独角兽”(至少 10 亿美元)。

一位消息人士告诉我们,对话没有取得进展,主要是因为 Facebook(以及现在的 Meta)将 Workplace 视为“战略资产”——不是因为 Workplace 产生的销售额接近 Meta 在 Facebook 和 Instagram 等平台上通过广告赚取的数十亿美元,而是更重要的是向市场展示更加多样化的面孔。对于监管机构来说,这表明 Facebook/Meta 不仅仅是一个过于强大的社交网络;对于组织而言,Facebook 可以为他们做的不仅仅是销售广告。

“这有助于让 Facebook [和 Meta] 看起来像个成年人,”消息人士说。

Meta 和 Workplace 的发言人表示,他们没有什么可分享的,并拒绝对本文发表评论。目前尚不清楚哪些投资者参与其中,但一位消息人士称,他们属于那些专注于后期增长轮投资的投资者,目的是专门为企业机会注入资金。

他们去年为一个分拆的 Workplace 提供资金的方法是在后期和私募股权投资者正在(并且仍然)加大活动以抢购大型成熟科技企业的时候出现的。据报道,Thoma Bravo 去年筹集了 350 亿美元,以利用该领域的更多收购机会(为此,它一直在进行大量投资和收购)。彭博社估计,2021 年私募股权收购总额约为 800 亿美元,与 2020 年相比增长 140% 以上。

这一步伐今年似乎并未放缓,其中包括私募股权公司接近更大的技术巨头以剥离业务,因为他们希望从较少的核心、可能无利可图或更普遍滞后的资产中精简和实现更多资本。就在今天早些时候,Francisco Partners宣布以约 10 亿美元收购 IBM 的 Watson Health 业务。

建立 SaaS 滩头阵地

对于 Meta,剥离 Workplace 的方法突出了两个方面的发展。

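On the corporate side, there have been calls to break up the company. The latest development on that front came earlier this month, when a court ruled that the U.S. Federal Trade Commission can proceed with a lawsuit seeking the sale of WhatsApp and Instagram, and there is reportedly a separate investigation into antitrust violations at its VR division. Some investors and shareholders see this situation as an opportunity, and Meta may increasingly have to weigh that tension as it justifies holding on to its various assets.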

对于 Workplace,该部门在过去几个月中发现自己处于一个关键的十字路口。

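On one hand, Workplace has seen a number of significant departures, including no less than its two former top executives: Karandeep Anand (named chief product officer of Brex this month) and Julien Codorniou, who left to become a partner at London VC Felix Capital. Others have also left for opportunities elsewhere.

The logic behind some of these moves was described to me, charitably, not as a response to the bad PR Meta has faced but as natural attrition: a group of people came together to create and build Workplace from scratch, and now that it is a more mature product with a clearer focus, it is the right time for new people to take it into its next phase. (My personal take: Ujjwal Singh, Workplace's new head, feels like a solid choice to lead it right now.)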

但即使有报道称员工可能会因为 Meta 不断受到舆论法庭的抨击而感到疲惫,但 Workplace 也未能幸免于难。据我们了解,Workplace 与一家大型连锁餐厅签署了一笔巨额协议,这是最大的连锁餐厅之一,但由于坏消息周期和“声誉问题”,客户要求在去年秋天推迟宣布。

“这种事不会发生在其他 SaaS 公司身上,”一位人士说。这似乎是支持将 Workplace 与其父级隔开的一个论点,也许是通过衍生的方式,但 Meta 似乎有相反的想法。

自首次作为产品推出以来,Workplace 多年来实际上发生了很大变化。

Workplace 最初是作为Facebook 的“工作”版本创建的——扩展了 Facebook 员工已经使用 Facebook在私人团体中相互交流的方式——Workplace 的推出是为了应对 Slack 和其他工作场所聊天应用程序的兴起。Workplace 的逻辑是它具有天然优势,因为数十亿人已经在使用 Facebook。而且,推出针对不同类型用户的新服务,采用不同的商业模式——付费而非广告支持——为公司打开了新业务可能性的大门。

即使 Workplace 的重点发生了变化,这在很大程度上仍然是公司的战略。最初,它引入了一些与其他针对知识工作者的工作场所生产力工具的集成,这是为了更直接地与 Slack 和 Teams 等公司竞争而做出的更大努力的一部分。但随着时间的推移,几乎是在偶然的情况下,Workplace 发现了一群主要通过移动设备与雇主交流的无办公桌工人。因此,Workplace 的最佳选择是同时成为两类员工的通信应用程序。

一位消息人士说:“我们意识到,与其要求我们的客户在 Teams 或 Slack 和 Workplace 之间进行选择,你可以两者兼而有之。” “其他人可以为知识工作者处理实时消息通信,而 Workplace 最适合每个人使用异步。”

这似乎是 Workplace 现在战略的指导思想,它最近将Microsoft Teams 的更多功能集成到其平台中以补充 Workplace,并于昨天宣布与 WhatsApp 的新集成,这已经在一线团队中非常受欢迎,现在将成为 Workplace 通信的更正式界面。据我们了解,涉及 Meta 的 VR 业务和 Portal 的更紧密的集成和服务也在进行中。

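Although the company will not update its user numbers until later this year, a source told us that Workplace now has close to 10 million users, with major customers including some of the world's largest employers, such as Walmart and AstraZeneca.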

虽然 Workplace 过去曾作为独立产品出售给客户,但“我认为它不会再作为独立应用程序出售,”一位消息人士说。

相反,它将成为套件的一部分,例如销售业务消息和 Workplace,或与 Facebook 登录功能一起,打开 Meta 如何与这些业务互动的前景。(向企业进行更广泛的销售宣传也可能是其收购 CRM 初创公司 Kustomer的动机,尽管该交易尚未完成。)

到目前为止,Meta 还没有准备好与 Workplace 分开,似乎现在将其定位为包含更大 SaaS 业务的滩头阵地的一部分。它能否像一家独立公司一样动员起来以实现这一机会?如果没有,风投们可能仍在等待。

-

数字革命:人力资源技术如何永远改变工作场所

当全国各地的办公室关闭时,员工们收拾好他们的工作,很可能预计在一两个星期后会回来。但是,一年半多以后,这些员工中的很多人仍然没有回到那些桌子上,我们的集体工作世界现在几乎完全存在于我们的电脑屏幕中。

根据芝加哥大学贝克尔-弗里德曼经济研究所(Becker Friedman Institute for Economics at the University of Chicago)2020年的一份白皮书,在这场大流行的初期,估计美国37%的工作可以远程完成。但在当时,只有7%的美国工人曾经在完全远程的环境中工作过。

当然,今天,这一切都改变了。根据盖洛普公司最近的一项调查,现在,52%的工人已经在家里完成了他们的工作。这一统计数字预计只会增长,这是因为劳动力有能力接受技术工具,使办公室能够轻松过渡到虚拟世界。

在整个COVID-19危机中,工人们对这种转变的反应基本上是积极的:根据员工成功软件网站Quantum Workplace的数据,82%的远程员工同意他们拥有与经理和团队保持联系所需的技术。而在生产力方面,78%的远程员工说他们在过去20个月里有很高的参与度,而现场员工只有72%。

这种被迫采用的技术已经触及工作场所的每个角落,但它已经彻底改变了公司的某些学科和部门,改变了我们参与、雇用和支持劳动力的方式。现在,已经没有回头路了。

科技如何使人力资源更人性化

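For HR executives, the groundwork for a technology-driven revolution had already been laid, but COVID gave leaders the push to truly embrace these tools.

"The push toward cloud-based ERP (enterprise resource planning) platforms was already in place," said Chris Michalak, CEO of technology solutions platform Virgin Pulse. "From a recruiting and talent acquisition perspective, the use of technology and the ability to administer benefits already existed. [The pandemic] provided an acceleration in how HR uses technology."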

根据Sapient Insights Group最近的人力资源系统调查,在2022年,15%的组织计划将其在传统人力资源技术上的支出平均减少23%。但另外28%的企业计划增加对更具前瞻性的非传统人力资源技术的投资,如远程工作工具和基础设施。

35%的受访者表示,在COVID消退后,他们至少有一半的劳动力将继续进行远程工作。从Zoom和Slack等应用程序到人工智能和社交媒体在招聘中的使用,技术使工作场所在整个大流行病中保持稳定成为可能。现在,越来越多的公司选择留在远程,这将取决于人力资源技术的发展,以支持新的需求。

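"The next challenge is how HR manages onboarding in a highly virtual world, and how we administer benefits in a more centralized way," Michalak said. "Being able to figure out how to connect people using virtual technology, while making sure you create a cohesive cultural experience for new team members joining your company, I think that is going to be absolutely critical going forward."

Michalak said that in the past, companies relied on resources such as onsite medical clinics and benefits forums to engage with employees, but that will gradually fade. Companies will rely more on technology to introduce people to their benefits and make sure they understand what is available to manage their physical and mental health needs.

"That is going to lead HR to focus much more closely on where the right place is to bring in technology," he said, "and on how to enable people to actually use it effectively."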

招聘工作变得聪明,DEI工作变得真实

在数字环境中,吸引和保留多样化人才的定义已经发生了变化,技术的扩散创造了更多的机会,使我们在招聘的方式--以及谁--方面有意识地进行思考。

由大流行病引起的劳动力短缺凸显了长期以来隐藏在众目睽睽之下的最严酷的招聘现实之一:求职者库中有大量未开发的少数民族人才,而这些候选人在传统的招聘方案中被系统地忽略了。但技术可以帮助弥补这一差距。

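Vaishali Shah, vice president of diversity and inclusion at Randstad Sourceright, said these tools have always been there. The pandemic pushed companies to embrace them.

"The pandemic made it a no-choice situation," she said. "Before, companies chose to [use less technology] or chose to use more. Now it is as if you have to use technology, and you cannot ignore what the technology is telling you. If the data is in front of you, it is no longer opinion and no longer up for debate. You can actually see what your processes are driving."

Although company-wide DEI initiatives are on the rise, discrimination in hiring remains rampant. According to a recent study by economists at the University of California, Berkeley and the University of Chicago, applications from candidates with names perceived as "Black names" received fewer callbacks, on average, than similar applicants with names perceived as "white names." As more companies look to streamline their hiring processes, many of the tools at their disposal are also designed to address discrimination.

"Technology has helped remove a lot of the human bias in interviews," Shah said.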

例如,LinkedIn推出了一个 "盲目简历 "功能,隐藏申请人的姓名和面孔,以努力照顾代表性不足的社区。随着在家工作被广泛接受,越来越多的公司向残疾或患有慢性疾病的人才开放了机会,这些人以前被排除在物理工作场所之外。

"[虚拟招聘]使残疾人更容易了解工作,与公司接触,与职位匹配,然后真正被选中,"Shah说,并指出,人类的偏见往往可以被消除。"技术只是把[简历]中最相关的部分拉出来。"

根据Glassdoor最近的一项研究,86%的人力资源专业人员觉得招聘已经变得更像市场营销。他们没有错:根据CareerArc的《2021年招聘的未来》,82%的求职者在申请前会考虑一个公司的品牌和声誉。而EBN的母公司Arizent最近的一份报告发现,64%的员工对为一家有证据表明缺乏多样性的公司工作的兴趣不大。

这意味着,招聘--以及利用技术手段公平地进行招聘--仍将是雇主的首要任务。

心理健康有了自己的工具箱

当大流行病首次袭来时,与人力资源技术和招聘不同的是,技术实际上在一定程度上是员工心理健康广泛下降的原因。

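The CDC noted that fear, uncertainty, and isolation were major factors behind 40% of adults struggling with their mental health. And as people's lives moved online and home became the office, the line between work and life came dangerously close to disappearing, and employees struggled to cope.

"Everything about the way we work has changed: how we approach work, how we think about benefits, how we think about serving our employees," said Anna Dearmon Kornick, head of community at virtual time management platform Clockwise. "From a mental health perspective, it is about being aware of what work-life balance looks like and what that means for your team members."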

自大流行病发生以来,花在会议上的时间增加了约29%。根据咨询服务公司德勤最近的一项调查,77%的员工说他们经历过职业倦怠,91%的人说有无法控制的压力或挫折对他们的工作质量有负面影响。

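As a result, the number of people using employer-sponsored telehealth services to manage their mental health has surged by 154%. Companies including Starbucks and HP have expanded their benefits to include free therapy, meditation and wellness apps, and even access to a virtual vegetable garden. As of June 2021, mental health platform Ginger had seen a 410% increase in users accessing therapy and psychiatry services compared with pre-COVID levels.

"We have had to be more intentional than before about how we communicate and collaborate," said Dearmon Kornick, whose company, Clockwise, lets employees schedule meetings as well as downtime. "Making sure our team members know they can designate their working hours and designate their meeting hours, so they have protected time to get work done, helps keep an eye on potential burnout."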

Clockwise还为经理们提供了关于他们的员工如何花费时间的洞察力。如果一个员工的会议时间过长,而自己的时间不足,管理人员就会被提醒,并有机会在问题失控之前伸出援手。

"没有一个健康的劳动力,我们就无法完成工作,"迪尔蒙-科尼克说。"保护员工心理健康的最好方法是决定:你的弹性工作政策是什么样子的?在沟通该政策时要明确,以使该未来能够成功--不仅是对公司,而且是对团队成员的成功。"

The digital revolution: How HR tech has changed the workplace forever

作者:Paola Peralta

-

【美国】西雅图的一家职场分析平台Syndio今天宣布获得了5000万美元的C轮融资

领先的职场分析平台Syndio今天宣布获得了5000万美元的C轮融资,该平台的使命是确保公平和公正成为每个就业决定的一部分。Emerson Collective和Bessemer Venture Partners领投了本轮融资,Voyager Capital也有追加投资。这是Emerson和Voyager对Syndio的第三次投资,也是Bessemer的第二次投资。Syndio总共已经筹集了8300万美元。

Syndio提供唯一由数据科学驱动的软件,使公司能够分析和解决因性别、种族或其他比较而产生的薪酬公平问题,并随着时间的推移进行监测。Syndio的平台被包括Nerdwallet、Nordstrom、Salesforce和General Mills在内的200家公司用于衡量美国260多万员工的薪酬。

新的资本是在Syndio发展的关键时刻出现的,将有助于资助新产品的开发和招聘工作,以建立一个更大的平台,解决工作场所的公平问题,并满足公司不断增长的客户需求。在过去的两年里,该公司的年度经常性收入每年增长三倍,预计在2022年将实现类似的增长率。

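Over the past year, Syndio has seen a shift in customer use cases. More than ever, customers are taking a proactive approach, analyzing workplace equity more often and more broadly. Before the murder of George Floyd in May 2020, only 50% of Syndio customers analyzed race. Today nearly all of them (98%) analyze both gender and race. Nearly one-third (30%) of Syndio customers have begun analyzing pay quarterly or twice a year. Dozens of Fortune 500 companies use Syndio's software because it reduces legal risk, helps attract and retain top talent, and makes it easier for companies to understand why they may not be paying employees fairly and how to fix it.

"Our commitment to advancing equity is grounded in the belief that complex, systemic failures require different, modern approaches," said Fern Mandelbaum, managing director of venture investing at Emerson Collective. "Syndio has a simple goal: to make pay equity a reality for all employees."

"Every day, pressure from employees, investors, and governments is growing to close persistent workplace gaps and ensure companies' lasting success in the 21st-century economy," said Syndio CEO Maria Colacurcio. "The cost of ignoring these internal and external forces is clear: loss of brand strength, higher capital costs from employee attrition and inefficiency, and a collapse in morale. For the first time ever, technology exists to meet this moment and make workplace equity a fundamental leadership principle. These equity analytics technologies were defined and pioneered by Syndio, and we are delighted to have the continued partnership of Bessemer and Emerson to fuel our growth."

"At Databricks, our obsession with data and with finding the best ways to use it is understandable. A few years ago, when we made a commitment to pay equity and closing pay gaps, we set out to find the best, most data-centric, most unbiased approach to pay equity analysis. Syndio was the clear choice, and since we started using the software last year, we have gained deep insight into our pay equity and the factors underlying it," said Amy Reichanadter, chief people officer at Databricks. "The company not only offers the best software in the space, but has also provided access to and guidance from experts along our pay equity journey."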

Syndio的高管团队中有一半人是女性,七分之二的人来自历史上被排斥的群体。Syndio是由薪酬平等律师和麻省理工学院博士Zev Eigen于2017年共同创立的,他担任公司的首席科学官。Colacurcio于2018年加入,担任首席执行官。在此之前,她共同创办了Smartsheet.com,一个为各种规模的公司提供的工作协作工具。她在建立具有强大核心价值的成功公司方面有着良好的记录,这些公司致力于为其员工和客户服务。

关于Syndio

Syndio的使命是使雇主能够消除因性别、种族和民族而产生的非法薪酬差异,并持续做出一致和公平的薪酬决策。Syndio的客户大大降低了法律风险,节省了数百万的持续补救费用,并创造了积极的品牌声誉,这有助于在企业的各个层面吸引和保留顶尖人才。随着时间的推移,我们帮助企业缩小薪酬差距。Syndio很荣幸能与包括Salesforce、Nordstrom、General Mills、Nerdwallet、Match Group等在内的品牌合作,这些品牌在公平方面处于领先地位,为工作场所的公平设定了标准。

-

Facebook企业内部沟通平台Workplace付费用户达700万

北京时间5月5日上午消息,Facebook周二宣布,其企业通讯软件Workplace的付费用户已经达到700万,比去年5月的500万增加了40%。

Workplace是一款企业软件,企业客户可以将其用作与员工沟通的内部社交网络。这项服务于2016年推出,Spotify和星巴克等公司都是这款应用的客户。

Workplace远远落后于它的主要竞争对手。微软上个月宣布,其Teams产品现在拥有超过1.45亿的日活跃用户,比2020年4月的7500万增加了93%以上。Slack虽然不再公布用户数据,但该公司在2019年9月称每日活跃用户为1200万,该公司宣布,其付费用户数量从2020年3月的11万增加到2021年3月的15.6万,增幅近42%。
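The growth percentages quoted in this article can be checked with simple arithmetic. The short sketch below uses only the user counts stated in the text; the `percent_change` helper is a name introduced here for illustration.

```python
def percent_change(old: float, new: float) -> float:
    """Percentage growth from `old` to `new`."""
    return (new - old) / old * 100


# Figures as reported in the article.
workplace_growth = percent_change(5_000_000, 7_000_000)    # Workplace paid users
teams_growth = percent_change(75_000_000, 145_000_000)     # Teams daily active users
slack_growth = percent_change(110_000, 156_000)            # Slack paid customers

for name, value in [
    ("Workplace", workplace_growth),
    ("Teams", teams_growth),
    ("Slack", slack_growth),
]:
    print(f"{name}: {value:.1f}%")
```

The results agree with the article's wording: 40% for Workplace, a little over 93% for Teams, and just under 42% for Slack. The comparison also shows that the gap in absolute scale is far larger than the gap in growth rates.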

Workplace的用户增长对公司的财务业绩也无关紧要。Facebook没有公布Workplace对其收入的贡献,但这款服务与Oculus和Portal硬件设备一起计入了该公司财务业绩中的“其他”业务标签。2021年第一季度,“其他”占Facebook营收的2.8%,其余都来自广告。

Facebook首席执行官马克·扎克伯格(Mark Zuckerberg)周二在Facebook上发帖称:“我们把Workplace作为Facebook的内部版本来运营我们自己的公司,它非常有用,然后我们决定开始让其他机构也使用它。”

Workplace副总裁朱利安·科多尼欧(Julien Codorniou)对媒体表示,他部门的目标是让Facebook的数十亿用户使用商业it软件。Facebook的想法是,通过构建服务,使用人们已经从Facebook的消费者应用程序中熟悉的功能来实现这一点。

科多尼欧表示:“许多人以前从未接触过它,因为它太复杂或太昂贵。你可以把Workplace拿给我妈妈,她马上就会明白如何使用它。”

周二,Facebook还宣布了一系列针对Workplace的新功能。这包括一个新的现场问答体验,与微软365和谷歌的G Suite日历应用程序的集成,以及一系列新的多元化功能,包括不同肤色的表情符号,以及允许用户向同事们介绍自己名字正确发音的功能。

-

【爱尔兰】员工体验创业公司Workvivo

当大多数员工都在远程工作时,保持公司文化对每个组织来说都是一个挑战--无论大小。

即使在COVID出现之前,这也是一个问题。但随着这么多员工在家工作,这个问题变得更加严重。雇主必须小心翼翼地让员工不感到与公司其他部门脱节和孤立,并保持高昂的士气。

进入Workvivo,这是一家总部位于爱尔兰科克的员工体验创业公司,由Zoom创始人Eric Yuan和Tiger Global支持,在过去一年中稳步增长超过200%。

该公司服务的企业规模从100名员工到超过10万名员工,拥有超过50万用户。据首席执行官兼联合创始人约翰-古尔丁(John Goulding)介绍,自推出以来,它的留存率达到了100%。客户包括Telus International、Kentech、A+E Networks和Seneca Gaming Corp.等。

Workvivo由Goulding和Joe Lennon于2017年创立,于2018年年中推出了员工沟通平台,目标是帮助企业创建 "引人入胜的虚拟工作场所",取代过时的内部网。

"我们不是关于实时的,我们更多的是异步沟通,"Goulding解释说。"我们有很多事务性的工具,通常承载着更大的信息,关于公司正在发生的事情以及正在发生的积极事情。我们更注重人与人之间的联系。"

利用Workvivo,公司可以通过社交方式提供CEO最新的信息、对员工的认可--"更多的是塑造文化的东西,这样工人就可以真实地感受到组织中发生的事情。" 它在第二季度推出了播客,在第四季度推出了直播。

2019年,Workvivo向Zoom的袁先生展示了它的产品,袁先生最终成为该公司的第一批投资者之一。

然后在2020年5月,公司在A轮融资中筹集了1600万美元,由以大型成长型融资闻名的老虎环球领投。

Workvivo早在COVID-19大流行之前就已经建立起来了,它在去年找到了一个合适的位置。而对其产品的需求也反映了这一点。

"自从COVID来袭后,增长速度加快了,"Goulding告诉TechCrunch。"我们在收入、用户、客户和员工方面的规模比流行病开始前增长了三倍。"

他说,这家SaaS运营商每年的交易额从5万美元到接近100万美元不等。Workvivo总部位于欧洲,在82个国家开展业务。但其大部分客户位于美国,其80%的增长来自美国。

这家初创公司于2020年初在旧金山开设了一个办事处,它正在扩大该办事处。目前,其65人的团队中有30%的人在美国,还有一些人在其他州远程工作。

虽然Workvivo不愿透露硬性的营收数据,但Goulding只表示,考虑到公司 "处于非常强大的资本状况",它不会很快寻求额外的资金。

为了解决同样的问题,微软上个月推出了新的 "员工体验平台 "Viva,或者用非营销术语来说,它对大多数大公司倾向于为员工提供的内部网网站的新看法。微软此举,除了Workvivo之外,还在与Facebook的Workplace平台和Jive等公司进行竞争。

尽管这个领域越来越拥挤,但Workvivo认为自己比竞争对手更有优势,因为它与Slack和Zoom整合得很好。

"我们在生态系统中与Slack和Zoom并肩而坐,"Goulding说。"有Zoom、Slack和我们。"

Slack是实时消息和眼前发生的事情,而Zoom是实时视频和 "关于当下",他说。

在Goulding看来,微软的新产品还没有经过验证,是一种反动的做法。

"很明显,数字工作场所的中心有一场战斗要打赢,"他说。"我们是来捕捉组织的心跳,而不是脉搏。"

作者:Mary Ann Azevedo 来自 TC

-

【海外】解读:创新的HRTech厂商涌现如CultureAmp、Valamis、Lattice、Hitch、Eskalera等。

编者注:强烈推荐HRTech领域的创业者们读读,这篇总结了9个重要的创新产品和方向,其中基本HRTechChina都有过相关报道,JoshBersin基于对未来趋势的判断,推荐了这几个方向,虽然有几个跟我们中国国情不符合(多样性包容性,性骚扰培训等)但绝大多数的都与我们有极大参考作用!必读之一!

我想强调一下这个极其重要的HRTech领域中的一些重要创新。

1、Amplify:对话式微学习 by Culture Amp

首先宣布的是Culture Amp’s 新产品Amplify,这是一个管理和团队发展工具,我称之为对话式微学习。这套系统建立在Zugata(2019年1月收购)的愿景上,给管理者和员工提供一套小的微学习信息,组织成一个端到端的学习计划。用户每隔一天左右就会收到固定的消息,它们与互动和作业一起,带你完成一个学习计划。

这是一个非常创新的系统,它利用了你的邮件关系和同行给你的360反馈。第一个项目专注于基于技能的教练,它旨在帮助经理人提高团队的应变能力和生产力。它主要是教你如何一步步成为一名优秀的教练。

我和Culture Amp的试点客户之一Ellucian聊了聊,他们很激动。不仅是这个项目亟需,而且他们的员工也很喜欢这个项目,因为它很容易使用。

顺便说一下,Culture Amp是市场上最成功的反馈和调查公司之一,基于其文化优先的理念和奇妙的软件设计,建立了庞大的客户。自公司成立以来,我就一直是它的粉丝,看到公司从反馈发展到员工发展市场,我感到非常奇妙。

2、Valamis:取代传统系统的企业学习平台

我想提到的第二家公司是Valamis,这是一家了不起的芬兰公司,它建立了我见过的最先进的端到端学习平台之一。Valamis不仅是一个令人难以置信的创新和高质量的组织,他们的客户都是世界上最苛刻的客户(贝恩、美国国家航空航天局、波音公司、瑞典教育部等)。

Valamis基本上已经建立了每个公司梦想的现代端到端学习平台。这意味着先进的LXP、完整的LMS、学习记录存储、开发平台、内容管理,以及我见过的最先进的端到端学习数据平台之一。而Valamis帮助企业进行共情图谱、学习历程和基于角色的学习--这就是Capability Academies的意义所在。

学习技术市场上一个有趣的问题是,现在的学习数据洒满了公司的各个角落。有些数据在LMS中,有些在LXP中,有些在内容平台中,有些在评估和其他人才系统中。当所有这些数据没有整合起来的时候,几乎不可能真正提供有意义的学习体验。好在Valamis解决了这个问题,他们的产品给我留下了非常深刻的印象。

这家公司现在刚刚进入美国市场,公司的芬兰传统和文化令人耳目一新,以客户为中心,而且质量很高。如果你想了解Valamis,请阅读Sisu--芬兰语中的力量、耐力、韧性和关怀的意思。我们现在都在寻找Sisu,如果你想看到它的行动,我鼓励你与Valamis交谈。

我认为他们将在未来几年成为更大的参与者之一。

3、Grow by Lattice: 绩效管理的开发解决方案

学习和绩效管理的历史有点丑陋。多年前,像Cornerstone、Saba和SumTotal这样的厂商率先提出了将学习管理和绩效管理合并的想法。但他们的做法很落后:他们建立了复杂的学习平台,然后在上面添加了难以使用的绩效管理应用。企业之所以购买这些产品,是因为我们正处于 "一体化人才管理 "的时代,但最终的结果是,大多数绩效管理程序都太复杂,难以使用。

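Meanwhile, agile, easy-to-use vendors such as BetterWorks, 15Five, HighGround, and Lattice were born, and they are all growing like crazy. Why have they become so popular? Because companies badly need team management tools that help teams set goals, collaborate on projects, send each other kudos, and track who is supposed to do what. I like to call it "project accountability software," because it helps you get projects done and decide who is responsible for what. (Asana does this too.)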

好吧,Lattice,这些厂商中最成功的一家,刚刚带着他们的新产品Grow进入了学习市场。Grow与Amplify(上文)略有不同,因为它专注于与工作相关的发展规划,而不是学习交付。对于所有Lattice的客户来说,他们一直在努力寻找给员工制定发展计划以及目标的方法,Grow是完美的。它易于使用,专为人力资源驱动的发展而设计,并将帮助Lattice发展成为更多的学习解决方案。

4、Hitch:最新的人才市场公司

人才市场产品的市场一直是爆炸性的。现在每个大型企业都在寻找其中的一个,需求量非常大。人才市场是一个将项目(或工作)与内部员工进行匹配的软件平台,帮助员工快速找到公司的发展机会、项目、导师或新角色。这个市场的先驱是Fuel50和Gloat(都是快速发展的厂商),但也有很多新的厂商进入。

这些公司包括Eightfold.ai(我见过的最先进的人工智能驱动的招聘和匹配系统之一)、Avature(是的,Avature现在有一个人才市场的产品)、Pymetrics(从评估方面进入市场)、365Talents(一个先进而成熟的解决方案,在法国很大),以及Degreed、EdCast等新产品。

嗯,其中最有意思的是一家叫Hitch的公司,它是世界上最大的地图公司之一Here Technologies的衍生品。Here Technologies是由一系列德国汽车制造商合并而成,他们拉帮结派,与谷歌争夺地图。他们与诺基亚合并,所以他们是一家拥有2万多名员工的全球性大公司。

随着公司的发展,从传统的根基上发展起来(它最初叫Navteq,成立于1985年),CHRO发现他们需要雇佣更多的承包商,并建立一支更加敏捷的员工队伍,去拍摄、绘制和分析世界各地的地理环境。他们为此搭建了一个平台,然后把这个平台分拆成了一家名为Hitch的软件厂商。

Hitch人才市场

我在Hitch呆过一段时间,它瞬间成为市场上比较成熟和先进的人才市场解决方案之一。我不会在这里赘述所有的细节,但它值得一看,因为这家公司有很多经验,真正让敏捷工作模式取得成功。

5、Eskalera:下一代多样性、包容性和分析平台。

DEI(Diversity, Equity, and Inclusion)现在是一个白热化的市场,公司正在尽一切可能改变招聘、晋升、薪酬和持续的管理行为,以推动包容性、归属感和多样性。我们很快将在这一领域启动一个大规模的研究项目(敬请关注),但与此同时,有一些非常有趣的新供应商值得考虑。

我特别感兴趣的是Eskalera,这是一家由高盛前CHRO运营的公司,它建立了一种非常创新的方式来衡量和分析公司的多样性和包容性实践。Eskalera还是一家年轻的公司,但如果你想为DEI建立一个好的衡量项目,我建议你和他们谈谈。SAP和Workday有许多开箱即用的分析和报告解决方案--Eskalera的解决方案特别独特,因为它能衡量标准人力资源指标中不明显的多样性方面。

顺便说一句,整个分析领域已经爆发了。我建议你看看其他一些有趣的分析厂商,包括ChartHop(一个开创性的组织分析系统,让任何类型的分析都变得非常简单),Workday的新DEI分析,OrgVue,SplashBI,Nakisa和Sisense。 这类 "可视化分析 "系统能够快速提供COVID响应的分析,也可以用于场景规划和工作场所的重新设计。

6、ServiceNow安全职场应用,工作流程的主力军

ServiceNow给我留下了非常深刻的印象(和启发)。这家以IT服务和案例管理起家的公司,今年已经完全重塑了自己,在安全工作场所证明、员工定位和调度、案例和知识管理以及沟通等方面都有广泛的应用。

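The real power of ServiceNow is not just its applications and platform, which have become the standard toolset for IT and HR service centers around the world. It is the company's "workflow app development" tooling, which lets you build your own workflow apps, employee experiences, and all the "return to work" solutions you need to help people decide which office to go to, which desk to sit at, and countless other things nobody thought about a year ago.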

ServiceNow在2020年将整个公司的重心转移到了这个领域,并且真的得到了回报。

ServiceNow现在的市值几乎是Workday的两倍。

7、Emtrain,基于情景的多样性和骚扰问题培训,推动变革。

顺便说一下,另一家我非常兴奋的供应商是Emtrain,这是一家培训公司,现在提供一些我见过的最引人注目的基于视频的多样性和骚扰培训。Emtrain不仅建立了一套令人难以置信的丰富的内容,该公司现在让你通过一些非常创新的调查来衡量你自己员工的行为,他们包括在每个培训模块。培训将你暴露在一个困难的情况下,然后问你一组关于你将如何应对的问题。然后,你的员工的反应可以与类似的公司进行对标,你可以立即看到你的问题所在。我认为这是一种非常创新和强大的方式,可以把培训变成真正可操作的解决方案。

在这个市场上有数百家供应商,所以我不声称自己是专家--但Emtrain的方法是独特的,内容也是惊人的。

8、Workplace by Facebook

今天美国国会公布了一份450页的报告,描述了为什么Facebook、谷歌、苹果和亚马逊是垄断企业,它们损害了我们的经济。我仔细读了一下,里面有很多很好的论据。但撇开这些不谈,Facebook现在在人力资源科技领域做了一些很酷的事情。

虽然我不是Facebook的粉丝,但Facebook的新平台Workplace却让人印象深刻。它不仅是我见过的最简单的企业社交协作系统之一(坦白说,我还是觉得Slack不可能用),而且在一线员工、零售和酒店业员工、现场销售和服务团队,甚至保险公司都非常受欢迎。为什么它能成功?它非常容易使用,而且它几乎包含了所有你能要求的功能。

Workplace提供了视频会议和共享、群组和内容共享、学习内容集成,以及各种开放的集成工具,这些工具提供了员工通信、社交识别,坦率地说,任何员工应用都可以通过聊天来传递(这意味着任何东西)。想象一下,您的薪资系统、时间跟踪、日程安排、福利管理、调查--都可以通过 Workplace 的聊天界面访问。我认为这将是一个大问题。(Facebook还提供了一个工作场所安全应用。)

Facebook现在明白人力资源技术和学习技术市场有多大,所以该公司正在迅速招募供应商合作伙伴,并向人力资源经理推销该解决方案。鉴于将其他人力资源应用与Workplace整合起来是多么容易,我认为这里的生态系统可能会变得庞大。而像 "环境会议 "和VR这样的新视频功能将是巨大的。

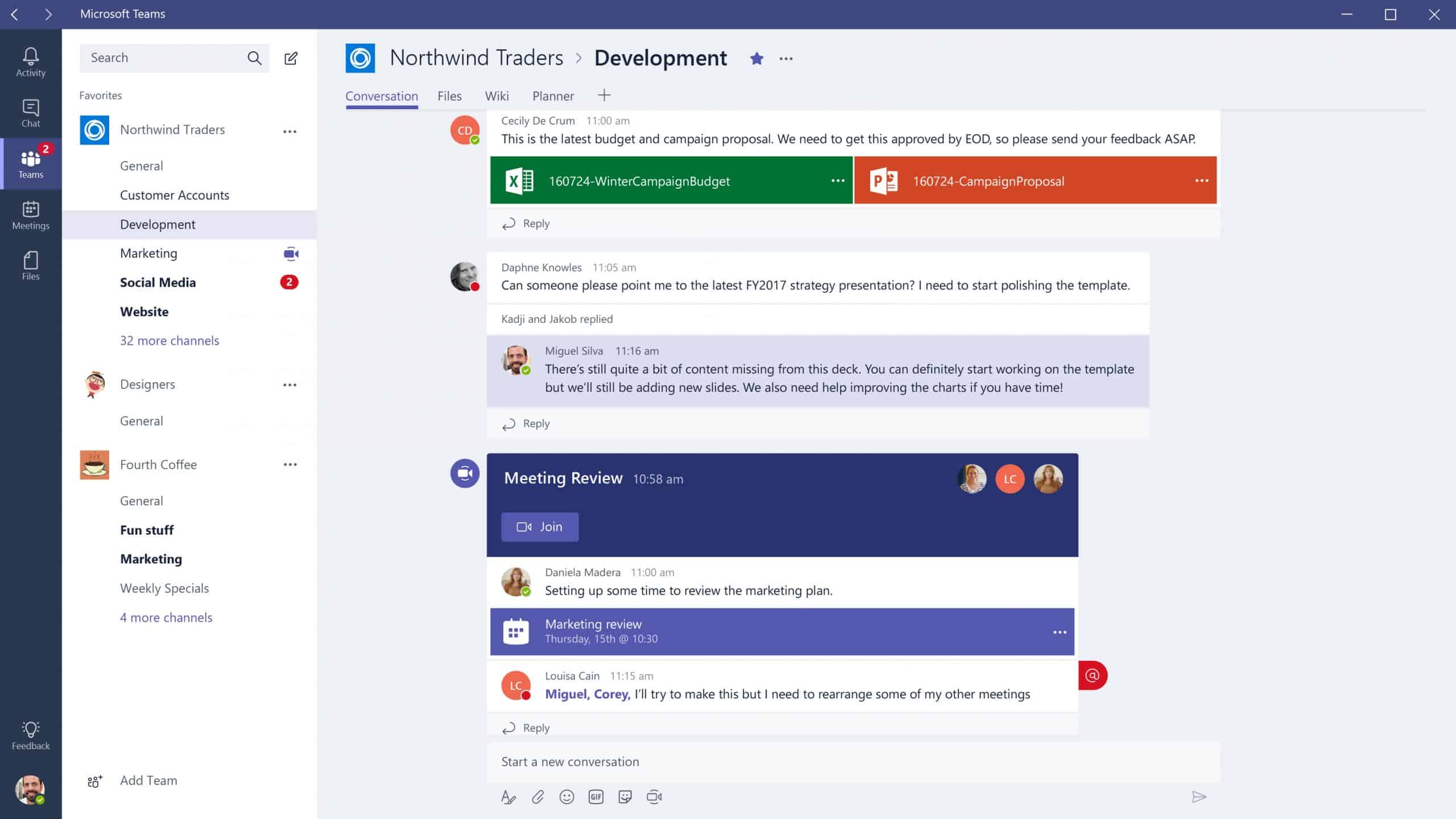

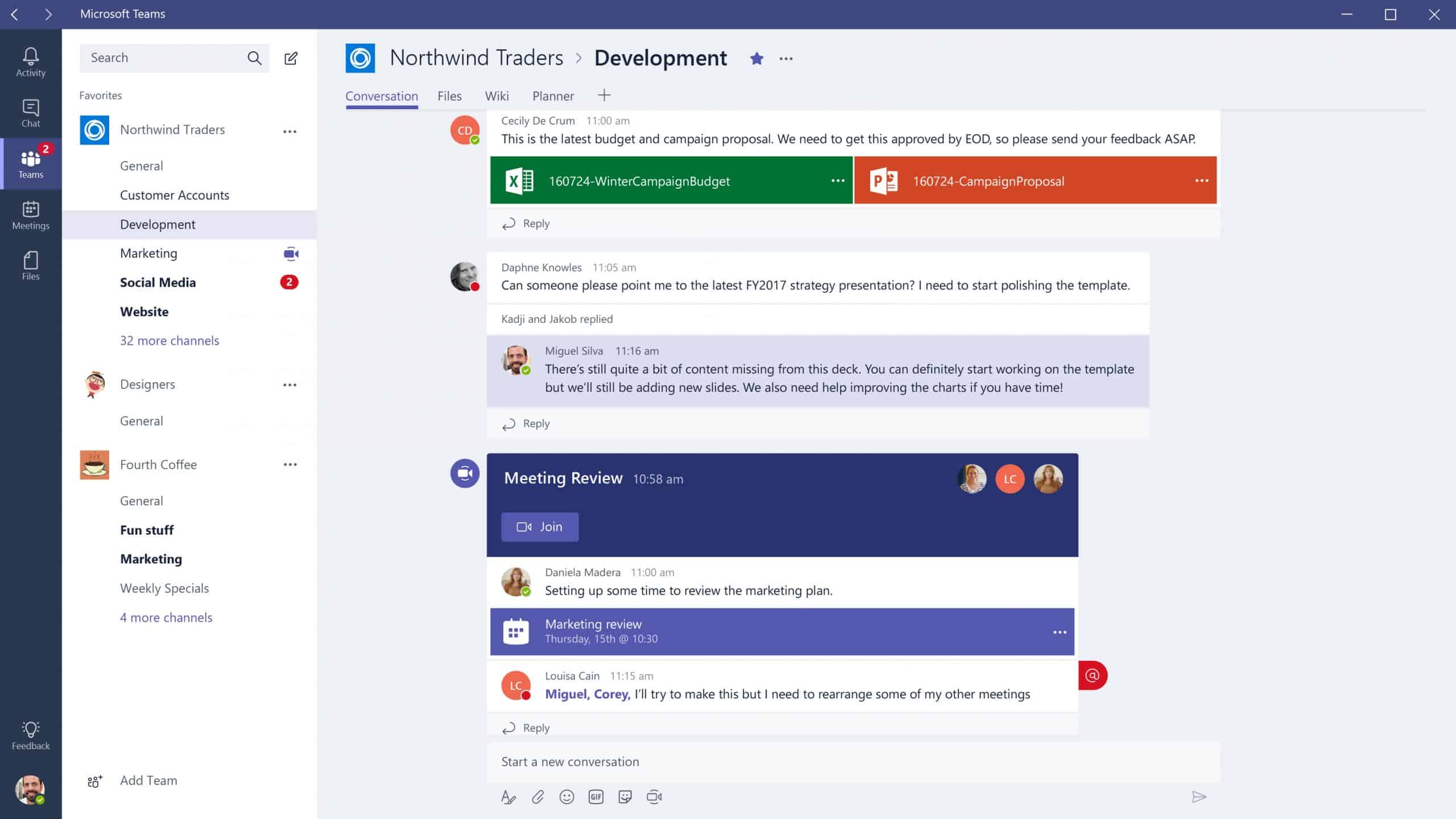

9、Microsoft Teams和Project Cortex

最后,最具颠覆性、可能改变地球的HRTech厂商是微软。在下一季度,微软将在Teams中推出更多的应用,这将包括学习应用、更多的生产力和福利的解决方案,以及很多新的厂商产品。虽然很多软件团队使用Slack进行一般的协作,但我认为Teams是商业应用的赢家,随着时间的推移,它将变成一个与Zoom、Webex和大多数员工沟通应用竞争的平台。

Teams并不是一个真正的 "应用",更像是一个 "平台",它有很多API,各种HR和员工应用都可以很方便地进行插件。我发现,现在几乎所有的大客户都在使用Teams,而基于人工智能的索引技术(名为Project Cortex)也将很快在后台启用,帮助你以闪电般的速度找到文档、人员、专家和合同。该系统在语言翻译、群组管理、大型组织协作方面的各种功能,微软都很了解。

而现在微软的创意也被打开了。Teams有了大型团体会议(体育场式座位)、上下班时间的新功能,我的Office版本还为我安排了 "专注时间",并以一种非常微妙而有用的方式给我健康提示。请记住,Teams是微软365(Office 365)的一部分,其中包括微软搜索、Graph和许多其他文档和工作场所技术,你的公司可能已经拥有。而且与LinkedIn的整合比你想象的要快。我将在几周内写更多关于Teams和微软的文章。

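By the way, Google's newly announced Workspace (the rebranded G Suite) is clearly a direct response to Microsoft. While I know Google builds great tools, Google is far behind Microsoft in office productivity (I get lots and lots of Google links from many of you, and most of the time they don't even open), so for business users I think Microsoft is years ahead in its thinking. But competition is always good.

Written by Josh Bersin; abridged.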
